The Turing Machine

Mathematical Creatures and Where to Find Them, 3a

What is a computer?

If I were to ask myself what a computer is, off the top of my head, I’d probably say something like: "a machine that can compute." To the obvious follow-up question—“What does it mean to compute?”—I’d add: "doing operations, mostly (but not necessarily) mathematical ones." This isn’t a rigorous definition, but I think it works well enough for our current purpose.

Fun fact: until relatively recently, computers were people. Just as weavers wove and scribes scribed, computers were hired to perform (usually tedious and lengthy) arithmetic or mathematical calculations. With the rise of astronomy, navigation, ballistics, engineering, and later finance and insurance, the demand for accurate numerical tables—like those for logarithms, planetary motion, or artillery trajectories—skyrocketed. To produce and check these tables, institutions assembled teams of human computers, often in gendered, assembly-line-style setups: each person would compute a portion of the data, and others would check or combine the results. In our more race- and gender-conscious age, you might have heard of Katherine Johnson, Dorothy Vaughan, and Mary Jackson; they were Black women computers at NASA in the 1960s who calculated trajectories for space missions.

Computers raise two major kinds of questions. One is mathematical: it concerns the formal processes by which problems are solved using algorithms—finite, step-by-step procedures for solving a well-defined problem—along with questions about what can be computed, how efficiently, and under what constraints. The other is more of an engineering challenge: how to build a mechanical contrivance that can perform those computations, ideally faster, more reliably, or more powerfully than a human.

Let’s start with the second one.

Calculus ex machina

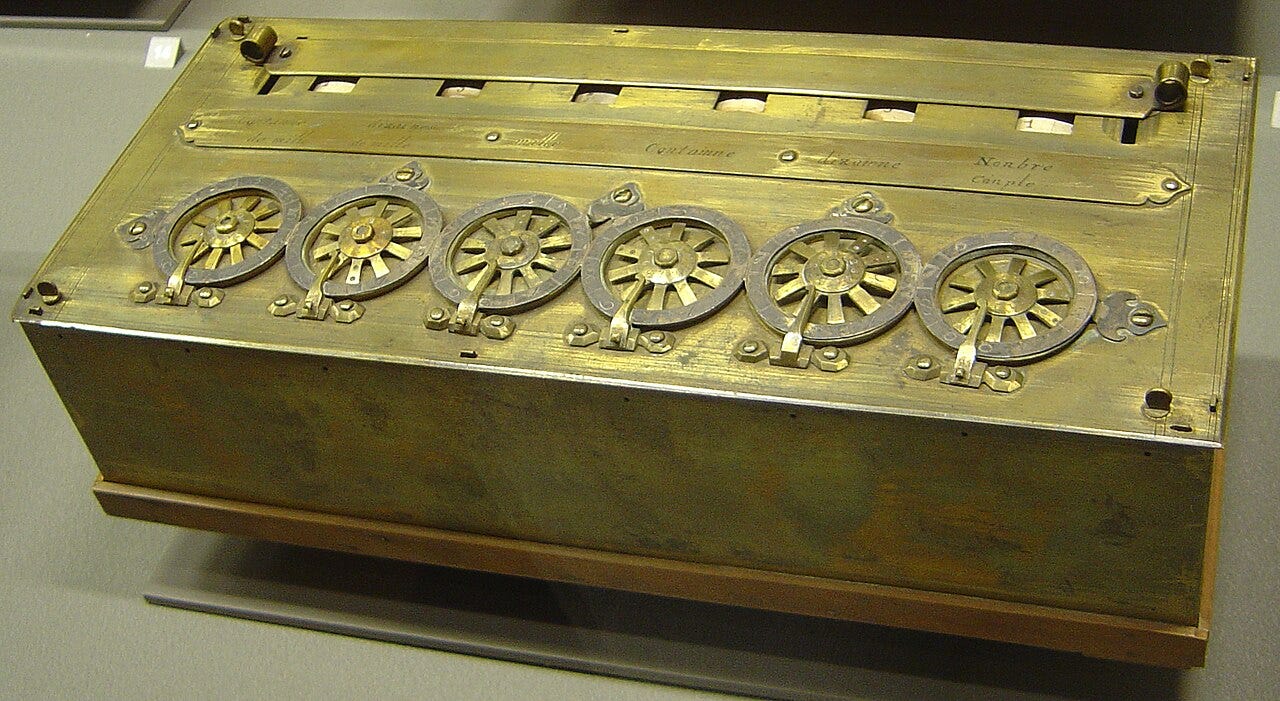

Most people think of computers and calculators as a 20th century phenomenon, but the story, as always, is more complicated. ‘Mechanical calculators’ of a sort can probably be traced back as far as Ancient Greece (look up the Antikythera Mechanism, which was designed to predict astronomical events). In the 17th century, Blaise Pascal built the Pascaline to help his tax-collector father: although unreliable and of limited functionality, it was a machine that could perform addition and subtraction using geared wheels. And a little bit latter, Newton’s arch-rival, Gottfried Wilhelm Leibniz, invented the Stepped Reckoner, which could multiply and divide (when you got it to work, which was seldom).

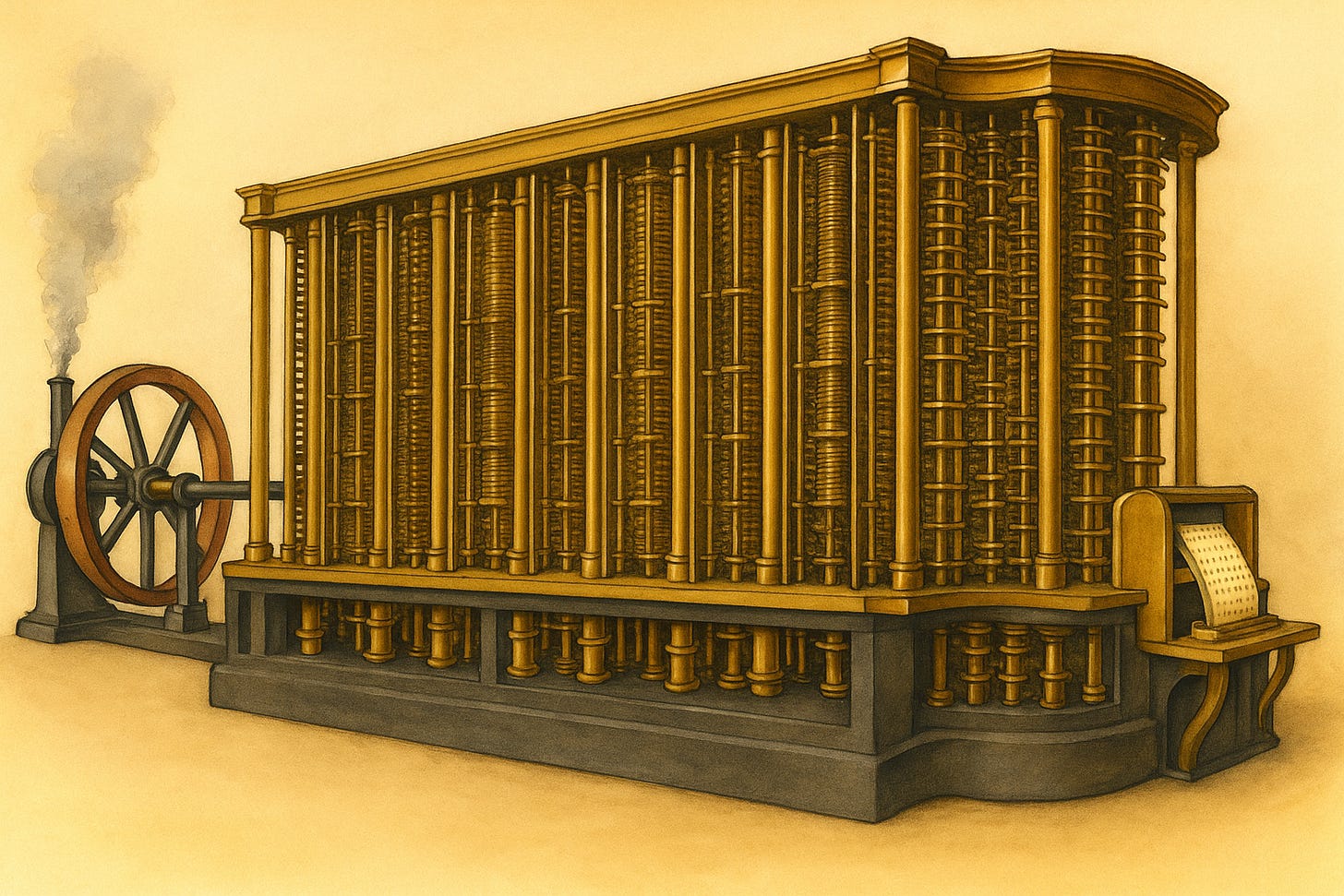

The next big step forward (although more theoretical than practical) comes from the work of Charles Babbage (1791 - 1871). He designed and (unsuccessfully) tried to build two computing machines: the first was the Differential Engine, which is an example of a single-purpose computer. It would have been an engine capable of computing polynomial functions using the method of finite differences—a clever numerical technique that avoids the need for multiplication or division. When built, it would have been able to calculate logarithmic and trigonometric tables. It could only be used for those specific things though, which severely limited its usefulness; this gave Babbage an idea for the second of his machines: one that could be programmed, i.e., you would be able to use it for different things. And this was meant to have been the Analytical Engine.

It pays to take a look at what this behemoth would have looked like: the complete version would have stretched 30 meters long and 10 wide, mostly made of brass, steel, and iron, and powered by steam (I mean, how much cooler can it get than that?). In many ways, it resembles a retrofuturistic ancestor of our modern computers: it was general-purpose, and included all the elements we now take for granted—an arithmetic logic unit, control flow with conditional branching and loops, and integrated memory. That memory could have stored up to 1,000 40-digit numbers, which the machine could retrieve and process for mathematical operations. Programs were input via punched cards, and its outputs included a printer, a curve plotter, and even a bell.

Alas, it was never built—but it lives on rent-free in the minds of many. In fact, you could say it helped inspire an entire subgenre of speculative fiction: Steampunk, with its "what-if" alternate histories where 20th-century breakthroughs arrive earlier, clad in the brass and vapor of the steam age. William Gibson and Bruce Sterling even wrote a novel titled The Difference Engine, named after Babbage’s (mental) creation.

In the end, machines like those envisioned in earlier centuries did get built—only now powered by electricity, and driven by wartime urgency. During World War II, some were used to calculate ballistic trajectories, but the most famous was created to crack the German Enigma machines. This was the secret work carried out in the 1940s at Bletchley Park, with the mathematician Alan Turing at the helm.

Turing cuts a romantic figure: the father of computation—both in theory and practice—a war hero, and a tragic victim of early 20th-century prejudice. Tried for homosexuality, he was condemned to chemical castration, and died in what was likely suicide, by a cyanide-laced apple. The image has taken on a symbolic resonance, evoking both biblical knowledge and, for some, the iconic logo of Apple Computers.

Back in 1936, Turing—then a young mathematician at Cambridge—set out to answer a deceptively simple question posed by David Hilbert (whom we met earlier in the section on cardinal numbers) and Wilhelm Ackermann. The question had a fancy-sounding name: the Entscheidungsproblem, or decision problem. Hilbert and company were on a quest to create a fully formalized mathematics. As we’ve already seen, this was a project doomed to failure—thanks (or due) to Gödel’s Incompleteness Theorems.

A positive answer to the Entscheidungsproblem would have meant that there exists a mechanical procedure—an algorithm—capable of checking whether any given logical statement (in first-order logic) is provable from a set of axioms. If such a method existed, mathematics could, in principle, be entirely automated.

That same year, Turing proved that the answer was no. His result came around the same time as one by the American logician Alonzo Church, and the two shared credit for the discovery. But it was Turing’s approach—conceptually elegant and more intuitive—that gave birth to one of the most important ideas in modern mathematics and computer science: the Turing Machine. About its details we will talk about in our next entry.

Computer: sinister cybernetic device that delivers me dopamine hits like a blackjack table